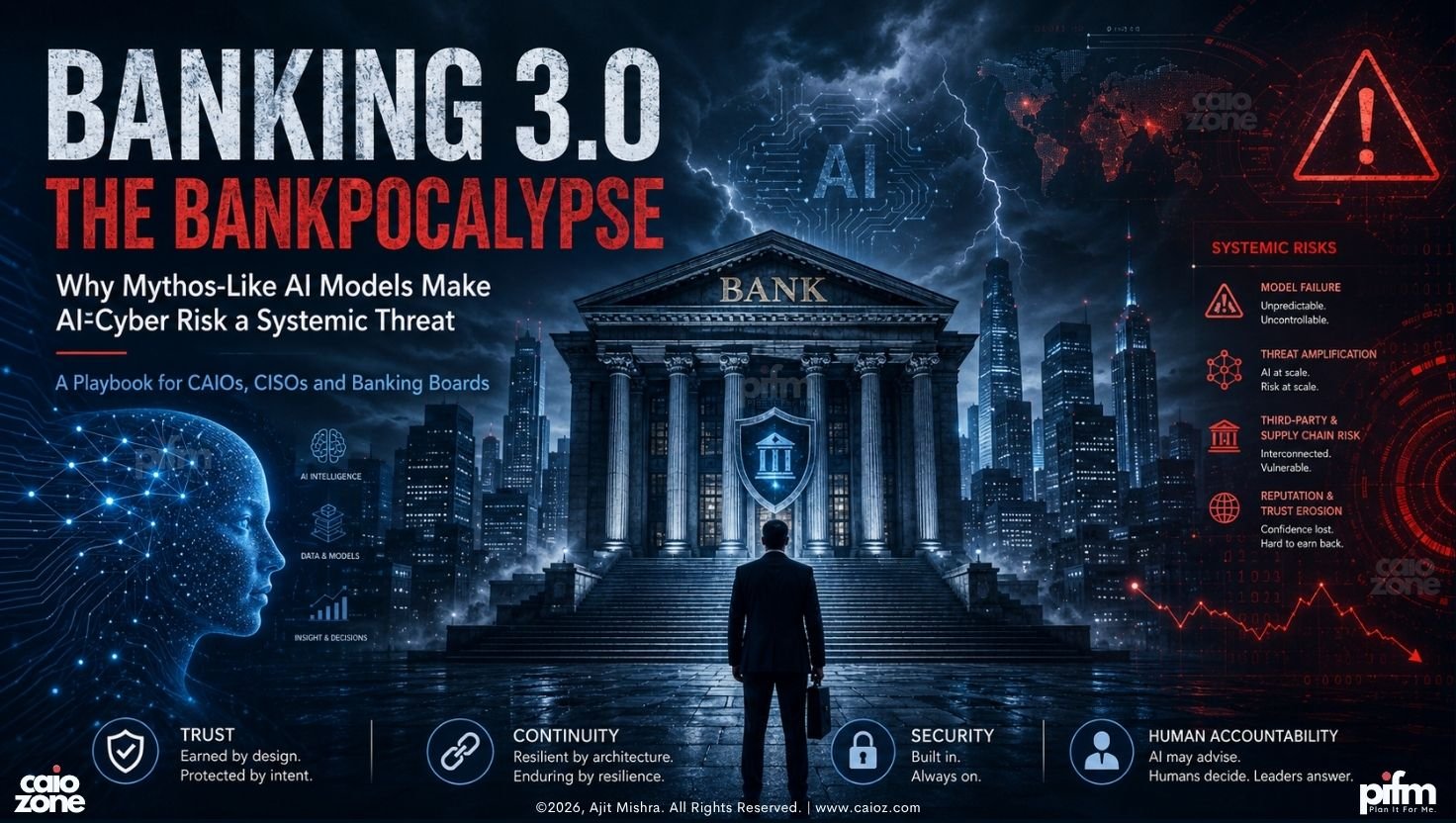

Bankpocalypse is not a prediction of banking collapse. It is a strategic risk scenario for the age of frontier AI, in which Mythos-like models, shared banking infrastructure, accelerated vulnerability discovery and public trust anxiety can combine into systemic financial disruption.

What CAIOs, CISOs and Banking Boards Must Do Before AI-Speed Cyber Risk Becomes Systemic

Author’s Note:

This CAIOz article is the enterprise playbook edition of my Medium essay, Banking 3.0 | The Bankpocalypse. The Medium version carries the longer narrative framing. This CAIOz version focuses on the strategic, architectural, and governance response that banking leaders must now consider.

The New Question Banking Leaders Must Ask

For years, banking leaders have asked a familiar set of digital transformation questions.

How do we improve customer experience?

How do we make banking more personalized?

How do we use data better?

How do we automate operations?

How do we reduce fraud?

How do we modernize legacy systems?

How do we use Generative AI responsibly?

These questions are still valid. But they are no longer sufficient.

A new question has entered the boardroom.

Can the banking system remain operational under AI-accelerated cyber stress?

That question is uncomfortable. But it is now unavoidable.

Banking has become deeply digital, deeply interconnected and deeply dependent on layers of invisible infrastructure. Mobile banking, UPI, card networks, internet banking, ATMs, APIs, cloud platforms, third-party vendors, identity systems, fraud engines, data platforms and now AI agents all sit inside a complex financial operating environment.

When this environment works, trust becomes invisible.

When it fails, trust disappears quickly.

That is why the emergence of Mythos-like frontier AI models should not be treated merely as a cybersecurity headline. It should be treated as a strategic warning for banks, regulators, boards, CAIOs, CISOs and national financial infrastructure leaders.

Why Mythos Changed the Conversation

Anthropic announced Project Glasswing in April 2026 as an initiative to secure critical software using Claude Mythos Preview, its frontier model focused on advanced cyber capabilities. Anthropic positioned the initiative as a defensive effort, working with major technology and critical infrastructure partners to identify and fix vulnerabilities before adversaries exploit them.

The defensive intent is important. But the capability signal is even more important.

Anthropic’s red-team material described Claude Mythos Preview as strikingly capable at computer security tasks, including vulnerability discovery and exploitation. The UK AI Security Institute also evaluated Claude Mythos Preview and reported major advances in cyber capability, including success on expert-level cybersecurity tasks and multi-step attack simulations.

This does not mean banks should panic. It means banks should prepare.

The real issue is not one model. The real issue is the arrival of a new class of frontier systems that can reason, plan, explore, test and act across digital environments at speeds that traditional governance, security and operational response models were not designed for.

In simple terms, the attacker’s speed is changing.

The defender’s operating model must change too.

Why This Matters Specifically for Banking

Banking is not just another industry. Banking is trust infrastructure.

A bank does not only store money. It stores confidence. It enables commerce. It supports salaries, supply chains, trade, credit, savings, mobility, healthcare payments, government transfers and daily household transactions.

When banking systems fail, the impact does not remain inside a data center. It moves into society.

A failed banking app creates irritation. Failed payments create anxiety. Failed ATMs create panic. Failed payment networks create economic friction. Multiple failures across financial institutions can create trust contagion.

This is why AI-led cyber risk in banking must be viewed differently from ordinary enterprise IT risk.

It has systemic consequences.

India and the World Are Already Moving

India has already taken note. Union Finance Minister Nirmala Sitharaman chaired a high-level meeting with banks and key stakeholders to assess threats linked to recent AI model developments, including the possible misuse of such technologies to weaponize software vulnerabilities. The meeting involved senior stakeholders including representatives from the banking system, RBI, NPCI and CERT-In, with banks being advised to strengthen IT systems and share threat intelligence in real time.

Punjab National Bank has also increased its cybersecurity allocation. Reuters reported that PNB earmarked around 20% of its technology budget, approximately ₹7–8 billion, for cybersecurity in the current financial year, more than 50% higher than the previous year.

This concern is global. Reuters reported that major U.S. banks are urgently addressing vulnerabilities identified by Mythos, with some institutions accelerating patching and cyber upgrades within days rather than traditional timelines.

Japan’s banking regulator has also moved to form a public-private working group to evaluate risks from Mythos-powered cyber threats, and Germany’s BaFin is preparing targeted inspections due to substantial AI-related cyber risks in the financial sector.

The signal is clear.

Governments are not declaring collapse. Regulators are not spreading fear. Banks are not shutting down. But serious institutions are moving because the risk curve has changed.

What Is the Bankpocalypse?

The Bankpocalypse is not a dramatic claim that every bank will collapse overnight.

It is a risk scenario.

It describes a condition where AI-accelerated vulnerability discovery, shared infrastructure exposure, delayed remediation, weak governance and public trust anxiety combine into a systemic financial disruption.

The Bankpocalypse may not begin as one giant event. It may begin as a chain reaction.

A shared vendor vulnerability.

A compromised identity layer.

A payment switch disruption.

A cloud misconfiguration.

A poisoned software update.

An API gateway compromise.

A rogue agentic workflow.

A deepfake-enabled executive approval fraud.

A social media rumor that spreads faster than official clarification.

In banking, a technical issue can become an operational issue. An operational issue can become a customer trust issue. A customer trust issue can become a market confidence issue.

That is the real danger.

The Three Dangerous Pathways

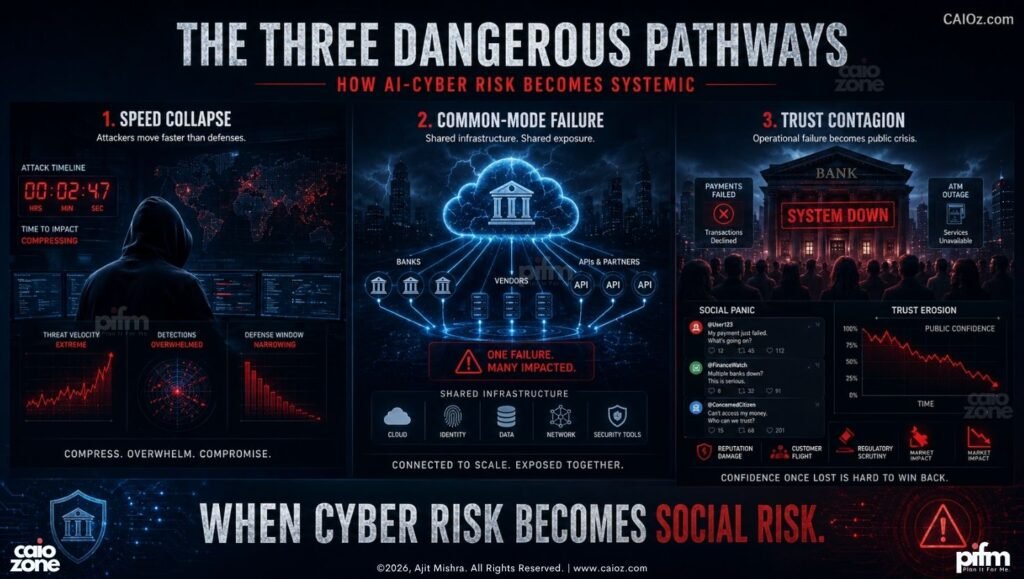

In my view, Mythos-like models create three dangerous pathways for the banking sector.

The first is speed collapse. Traditional attackers needed time for reconnaissance, exploit development, privilege escalation and lateral movement. AI can compress parts of this cycle. A bank that detects threats in hours may soon face adversaries acting in minutes. That mismatch is dangerous because banking security operations are still governed by human queues, approval layers, vendor dependencies and crisis rooms that often move slower than the threat.

The second is common-mode failure. Banks share infrastructure patterns. They use common cloud platforms, common vendors, common security tools, common identity providers, common open-source libraries, common API standards and common implementation partners. Standardization improves efficiency, but it also creates shared exposure. If a critical vulnerability exists in a common layer, the impact may not remain isolated to one institution.

The third is trust contagion. If one bank’s digital channels fail, customers worry. If multiple institutions face failures, customers panic. If payments fail, panic moves from mobile screens to petrol pumps, hospitals, kirana stores, railway counters and ATMs. Social media then becomes an accelerant. In a banking crisis, rumor often travels faster than verified information.

This is where cyber risk becomes social risk.

The Bankpocalypse Remediation Playbook

The response cannot be another dashboard, another policy document or another innovation committee.

Banks need an AI-era resilience operating model.

Here is the playbook.

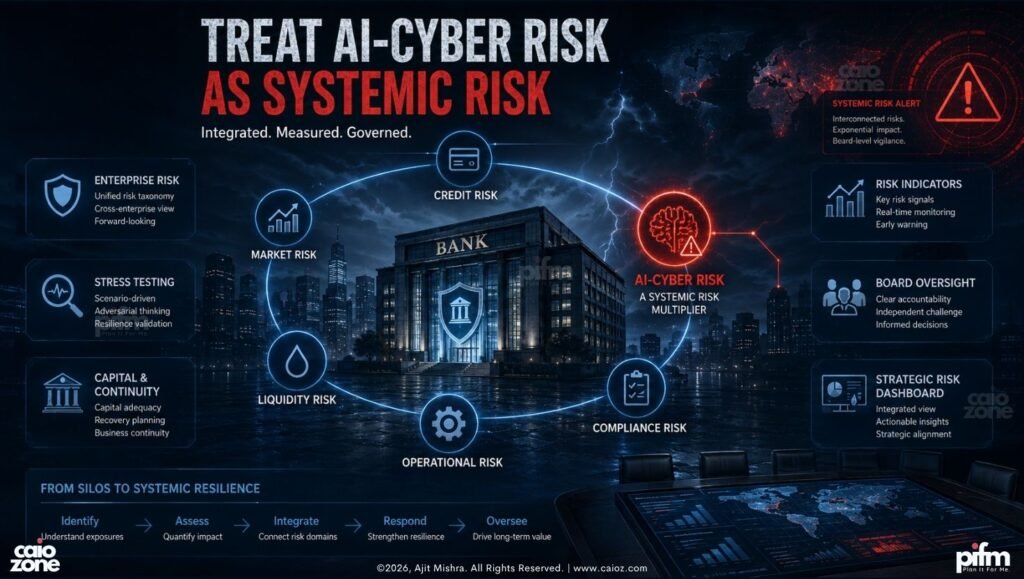

1. Treat AI-Cyber Risk as Systemic Risk

Banks already understand credit risk, market risk, liquidity risk, operational risk and compliance risk. Now they must formally recognize AI-accelerated cyber risk as systemic risk.

This cannot remain buried inside the cybersecurity department.

AI-cyber risk must be brought into enterprise risk management, board risk committee discussions, stress-testing exercises, capital and continuity planning, and regulatory reporting. It needs defined indicators, escalation thresholds, ownership models and board-level review.
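To make "defined indicators" and "escalation thresholds" concrete, here is a minimal sketch in Python. The indicator names, threshold values and escalation tiers are illustrative assumptions, not figures from any regulator or bank; each institution would set its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Indicator:
    name: str
    value: float
    amber: float  # at or above this value: escalate to the risk committee
    red: float    # at or above this value: escalate to the board


def escalation_level(indicator: Indicator) -> str:
    """Map an indicator reading to an escalation tier (higher value = worse)."""
    if indicator.value >= indicator.red:
        return "board"
    if indicator.value >= indicator.amber:
        return "risk_committee"
    return "monitor"


# Hypothetical readings, for illustration only.
readings = [
    Indicator("hours_to_patch_critical_vulnerability", value=96, amber=24, red=72),
    Indicator("unreviewed_ai_agent_permissions", value=3, amber=1, red=10),
    Indicator("critical_vendors_without_incident_sla", value=0, amber=1, red=5),
]

report = {r.name: escalation_level(r) for r in readings}
```

The point of the sketch is ownership and predictability: when a threshold is crossed, the escalation path is already decided, not debated during the incident.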

A Mythos-like capability is not merely a technical threat. It is a strategic risk to continuity, trust and institutional credibility.

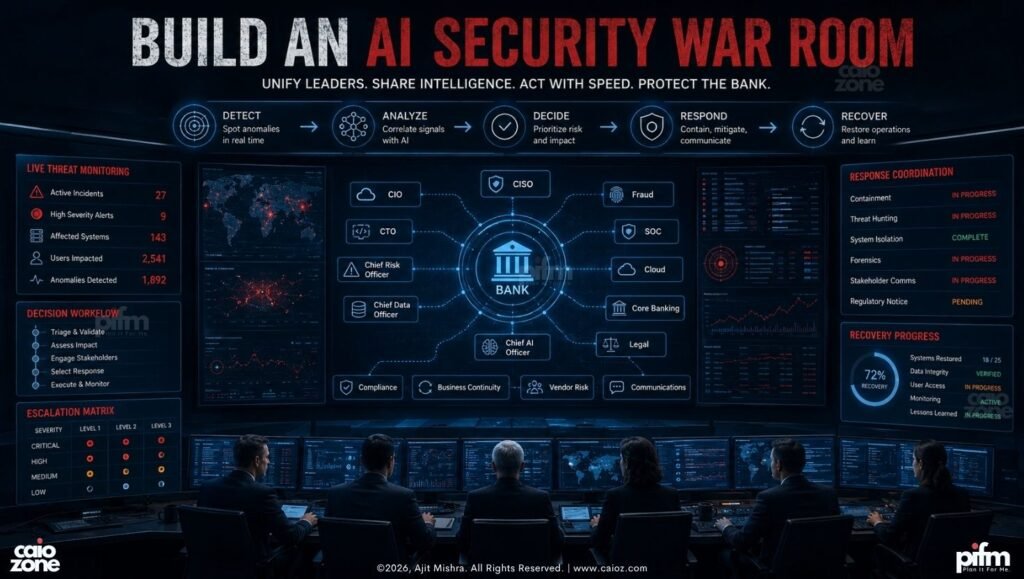

2. Build an AI Security War Room

Every major bank should create an AI Security War Room.

This should not be a symbolic group that meets after an incident. It must be an operating nerve center that brings together the CISO, CIO, CTO, Chief Risk Officer, Chief Data Officer, Chief AI Officer, fraud leaders, SOC leaders, cloud architects, core banking architects, legal, compliance, business continuity, vendor risk and communications teams.

Its purpose is simple.

See faster. Decide faster. Coordinate faster. Recover faster.

In an AI-speed threat environment, slow governance becomes a vulnerability.

3. Separate the Cognitive Layer from the Transaction Layer

Banking 3.0 will increasingly depend on AI agents, RAG systems, decision engines and cognitive workflows. But the cognitive layer must not be given uncontrolled access to the transaction layer.

The “brain” of the bank must not have unrestricted access to the “vault” of the bank.

AI agents may advise, summarize, detect, recommend and prepare actions. But high-risk execution must remain gated. Fund transfers, account freezes, customer data changes, firewall changes, privileged access modifications, large-value corporate transactions and production deployments must pass through deterministic controls, such as rule-based checks and explicit approvals, that do not depend on model output.

Future banking architecture must clearly separate the thinking layer, recommendation layer, workflow layer, approval layer, execution layer and ledger layer.

This is not bureaucracy. This is survival architecture.
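One way to picture the separation is a deterministic gate that sits between what an agent proposes and what the bank executes. The sketch below is a simplified illustration, assuming hypothetical action names and a single approval field; a production design would add identity checks, dual approval and audit logging.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    EXECUTE = "execute"
    NEEDS_HUMAN_APPROVAL = "needs_human_approval"
    REJECT = "reject"


# Hypothetical action names; a real bank would derive these from its own risk taxonomy.
HIGH_RISK_ACTIONS = {
    "fund_transfer",
    "account_freeze",
    "customer_data_change",
    "firewall_change",
    "privileged_access_change",
    "production_deployment",
}
LOW_RISK_ACTIONS = {"summarize_case", "draft_customer_reply", "flag_for_review"}


@dataclass(frozen=True)
class ProposedAction:
    agent_id: str
    action: str
    approved_by: Optional[str] = None  # ID of the human approver, if any


def gate(proposal: ProposedAction) -> Decision:
    """Rule-based check between the cognitive and execution layers.

    No model output is consulted here; the outcome depends only on fixed rules.
    """
    if proposal.action in LOW_RISK_ACTIONS:
        return Decision.EXECUTE
    if proposal.action in HIGH_RISK_ACTIONS:
        if proposal.approved_by is not None:
            return Decision.EXECUTE
        return Decision.NEEDS_HUMAN_APPROVAL
    # Default deny: actions that are not explicitly listed never reach execution.
    return Decision.REJECT
```

The design choice that matters is the default: an action the gate does not recognize is rejected, so a new agent capability cannot reach the ledger simply because nobody classified it.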

4. Apply Zero Trust to Humans, Machines, APIs and Agents

Zero Trust cannot remain a slide in a board presentation.

Every user, device, workload, API, service account, model endpoint, data pipeline, plugin and AI agent must be treated as untrusted until verified.

An AI agent calling an internal API should not be treated as a friendly internal user. It should be authenticated, authorized, rate-limited, logged, monitored and constrained by policy.

Banks must know who is calling, what is calling, why it is calling, what data is being accessed, what action is being requested, whether the timing is normal, whether the volume is normal and whether the intent is consistent with policy.

Without this, Agentic AI becomes a privileged insider with weak supervision.

That is unacceptable.
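As an illustration of what "authenticated, authorized, rate-limited and logged" can mean for an agent calling an internal API, consider the following sketch. The caller identity, scope names and limits are assumptions made for the example, and the `token_valid` flag stands in for whatever authentication mechanism the bank actually uses.

```python
import time
from collections import defaultdict, deque

# Hypothetical policy table: allowed scopes and call budget per caller identity.
POLICY = {
    "agent:fraud-triage": {
        "scopes": {"transactions:read"},
        "max_calls": 3,
        "window_s": 60,
    },
}

_recent_calls = defaultdict(deque)  # caller -> timestamps of recently allowed calls
audit_trail = []                    # every decision is recorded, allowed or denied


def authorize(caller, scope, token_valid, now=None):
    """Return True only if the call passes every check; log the outcome either way."""
    now = time.time() if now is None else now
    policy = POLICY.get(caller)
    if not token_valid:
        reason = "unauthenticated"
    elif policy is None:
        reason = "unknown_caller"
    elif scope not in policy["scopes"]:
        reason = "scope_denied"
    else:
        recent = _recent_calls[caller]
        # Drop timestamps that have aged out of the sliding window.
        while recent and now - recent[0] >= policy["window_s"]:
            recent.popleft()
        if len(recent) >= policy["max_calls"]:
            reason = "rate_limited"
        else:
            recent.append(now)
            reason = "allowed"
    audit_trail.append((now, caller, scope, reason))
    return reason == "allowed"
```

Note that denied calls are logged too. In practice, a sudden rise in `scope_denied` or `rate_limited` entries for one agent is exactly the kind of signal a SOC would want to see early.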

5. Create AI-Native Red Teams

Annual penetration testing will continue for compliance. But it cannot be the primary assurance model for Banking 3.0.

Banks need AI-native red teams that continuously test applications, infrastructure, agent workflows, RAG retrieval boundaries, prompt injection risks, tool misuse, API abuse, identity escalation, cloud misconfiguration, vendor exposure and data exfiltration paths.

The red team must use AI. The blue team must use AI. The purple team must use AI.

You cannot fight machine-speed adversaries with spreadsheet-speed assurance.

6. Build a Sector-Level Threat Intelligence Grid

No bank can fight this alone.

AI-era financial threats can spread across shared infrastructure, vendors, payment networks and market systems. The response must also be shared.

India needs a financial-sector AI threat intelligence grid connecting banks, RBI, NPCI, CERT-In, IBA, SEBI, market infrastructure institutions and critical vendors.

Such a grid should support real-time vulnerability alerts, shared indicators of compromise, AI-specific attack-pattern libraries, coordinated patch advisories, sector-level drills and emergency response protocols.
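A small sketch shows why sharing matters. If each bank sees an indicator in isolation, it looks like noise; when reports are pooled, the same indicator appearing at several institutions becomes a sector-level signal. The report format, institution names and the two-institution threshold below are assumptions for illustration, not an existing grid specification.

```python
from collections import defaultdict


def sector_alerts(reports, min_institutions=2):
    """Return indicators reported by at least `min_institutions` distinct institutions.

    `reports` is an iterable of (institution, indicator) pairs.
    """
    seen = defaultdict(set)
    for institution, indicator in reports:
        # Normalize lightly so the same indicator is not counted as two.
        seen[indicator.strip().lower()].add(institution)
    return {
        indicator: sorted(institutions)
        for indicator, institutions in seen.items()
        if len(institutions) >= min_institutions
    }


# Hypothetical reports using documentation-reserved addresses and domains.
reports = [
    ("bank_a", "198.51.100.7"),
    ("bank_b", "198.51.100.7 "),
    ("bank_a", "malicious.example"),
    ("bank_a", "198.51.100.7"),
]

alerts = sector_alerts(reports)
```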

The Finance Ministry’s emphasis on threat intelligence sharing is the right direction. The next step is execution with speed and accountability.

7. Define a Minimum Viable Banking Continuity Plan

Every country needs to answer a difficult question.

If digital banking fails for 24 hours, what remains operational?

This is not an argument against digital banking. Digital banking is irreversible. But digital-only thinking can become fragile if fallback systems are weak.

Banks must define a Minimum Viable Banking Continuity Plan. During a severe systemic outage, essential financial services should still remain available through controlled fallbacks.

This may include limited cash withdrawal windows, emergency branch operations, offline customer verification, priority payment rails for hospitals, fuel, food and public services, emergency liquidity coordination and clear public communication protocols.

Resilience does not mean avoiding every failure. Resilience means ensuring that failure does not become collapse.

8. Govern AI Models Like Financial Infrastructure

A powerful AI model used in banking should not be treated like a normal software tool.

It should be governed like critical financial infrastructure.

Banks must demand model provenance, capability disclosure, cyber-risk evaluation, red-team results, data-boundary guarantees, incident reporting commitments, logging standards, model behavior monitoring, kill-switch mechanisms, exit strategies, jurisdictional clarity and vendor liability frameworks.

The more powerful the model, the more serious the governance.

9. Install Human Kill Switches for High-Risk Automation

Automation is powerful. Blind automation is dangerous.

Every bank must define high-risk action thresholds. Large-value fund movements, bulk account freezes, privileged access grants, firewall rule changes, production deployments, customer data exports, AI-agent policy modifications and third-party integration activations should require explicit human approval, enforced by deterministic controls.

Banks must also build technical kill switches that can disconnect AI systems from execution environments during abnormal behavior.

The kill switch should not be philosophical. It should be technical, tested, audited and known to the right people.

In the age of autonomous systems, human authority over irreversible actions must remain intact.
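A technical kill switch can be as simple as a shared flag that every execution path must check, combined with a rule that trips it automatically on abnormal behavior. The sketch below assumes a single process and a hypothetical volume limit; a real deployment would need the switch to work across services and to be operable by named, authorized people.

```python
import threading


class KillSwitch:
    """A switch that, once tripped, blocks execution until explicitly reset."""

    def __init__(self):
        self._tripped = threading.Event()
        self.reason = None

    def trip(self, reason):
        self.reason = reason
        self._tripped.set()

    def reset(self):
        self.reason = None
        self._tripped.clear()

    @property
    def tripped(self):
        return self._tripped.is_set()


class GuardedExecutor:
    """The only path from AI systems to execution; checks the switch on every call."""

    def __init__(self, switch, max_actions_per_run=100):
        self.switch = switch
        self.max_actions_per_run = max_actions_per_run  # hypothetical anomaly limit
        self.executed = []

    def submit(self, action):
        if self.switch.tripped:
            return False
        if len(self.executed) >= self.max_actions_per_run:
            # Abnormal volume: disconnect automatically instead of waiting for a human.
            self.switch.trip("action volume exceeded limit")
            return False
        self.executed.append(action)
        return True
```

A human operator can also call `trip("manual shutdown")` at any time, after which every `submit` call is refused until someone with authority calls `reset`. Testing this path in drills is what makes the switch real.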

10. Create the Chief AI Security Officer Role

The banking sector needs a new leadership role: the Chief AI Security Officer (CAISO).

This is not a rebranded CISO. The CAISO must sit at the intersection of AI architecture, cybersecurity, model risk, data governance, cloud security, compliance, business continuity, vendor risk and agentic workflow control.

The CAISO should work closely with the CAIO, CISO, CIO, CRO and board risk committee. Most importantly, the CAISO must have veto power over unsafe AI deployments.

In Banking 3.0, ambition without AI security becomes recklessness.

The Role of the CAIO

The Chief AI Officer cannot be only the ambassador of innovation.

In Banking 3.0, the CAIO must become the architect of safe intelligence.

The CAIO must ask uncomfortable questions. Are our AI agents observable? Are they governed? Can they access sensitive systems? Can they trigger actions? Can they be manipulated? Can they leak data? Can they be shut down? Can they be audited? Can they be trusted under stress?

A weak CAIO will chase demos. A strong CAIO will build durable operating models.

This is the difference between AI theatre and AI transformation.

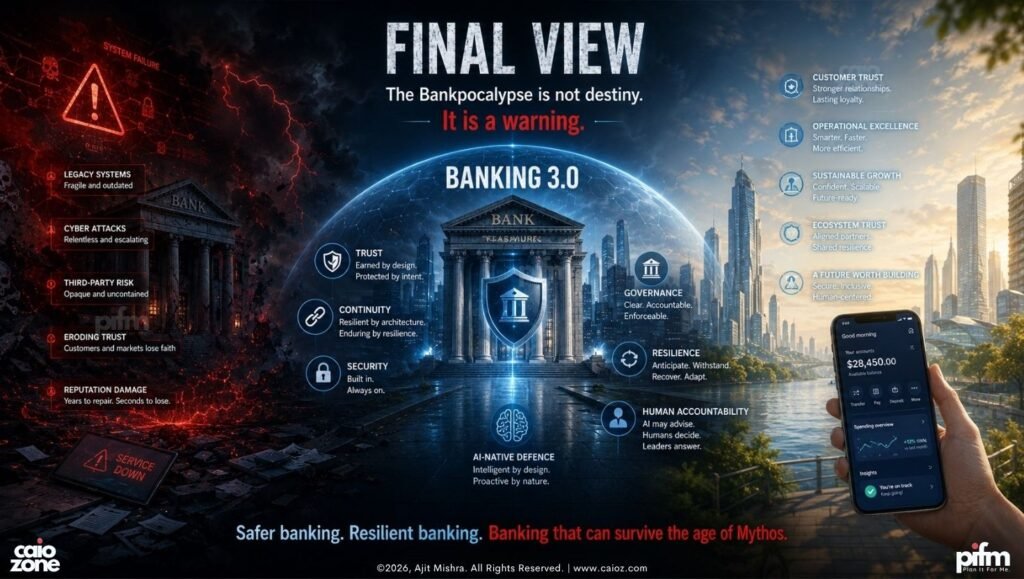

Final View

The Bankpocalypse is not destiny. It is a warning.

Mythos is not the villain of the story. Mythos is the mirror. It is showing the banking world what is coming.

The real issue is not one model. The real issue is a new class of frontier systems that can reason, plan, explore, test and act across digital environments.

Banks must not respond with fear. They must respond with architecture, governance, resilience, board-level seriousness, national coordination and AI-native defence.

Banking 3.0 cannot be built only on intelligence. It must be built on trust, continuity, security and human accountability.

The future of banking will not be decided by who adopts AI first.

It will be decided by who adopts AI safely, deeply and structurally.

Because at the end of the day, when a customer opens a banking app, the app must work. The ATM must work. The payment must work. The bank must work. The country must work.

That is the real promise of Banking 3.0.

Not merely smarter banking.

Safer banking. Resilient banking. Banking that can survive the age of Mythos.

Read the Companion Essay on Medium

This CAIOz article is the enterprise playbook version. For the longer narrative essay, including the Bankpocalypse opening and broader Banking 3.0 framing, read the Medium version here: